Panmnesia Unveils Link Solutions for Agentic AI at ISPASS 2026 Keynote

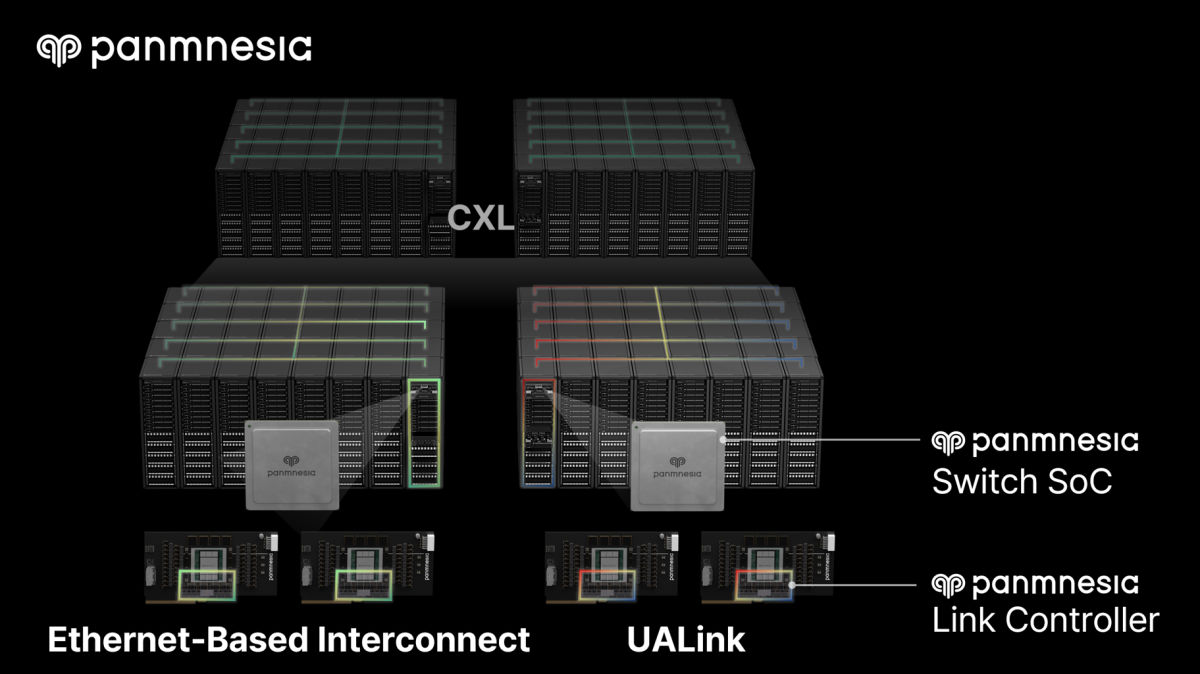

Panmnesia (CEO Myoungsoo Jung), an AI infrastructure link solutions company, unveiled its link solutions for agentic AI during its ISPASS 2026 keynote on the 28th, titled "From Generative to Agentic AI: The Memory-Centric Turn in Datacenter Design." The core of the proposal is to reduce data access overhead by storing and managing large-scale KV caches within a memory pool built on Panmnesia's CXL switches and controllers.

■ The Agentic AI Era: An Explosion of "Things to Remember"

In his keynote, CEO Jung covered the latest AI trends—including agentic AI—the challenges facing today's AI infrastructure, and the next-generation AI datacenter architecture Panmnesia proposes to address them, along with the link solution products that make it possible.

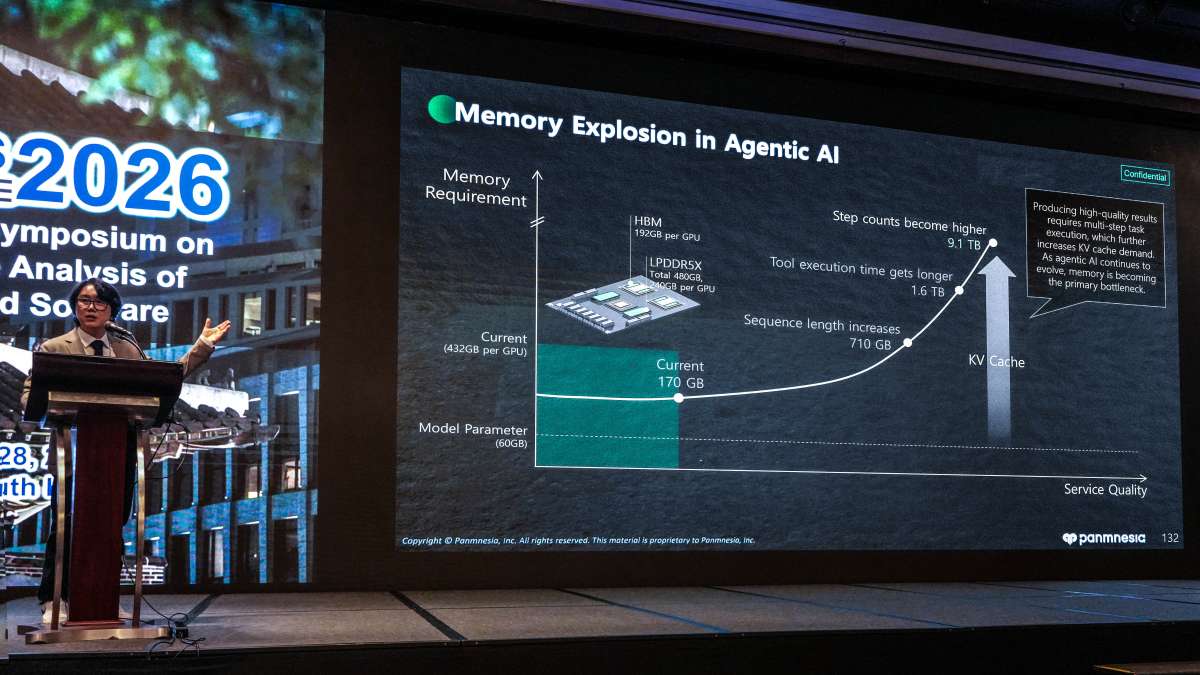

Agentic AI, which autonomously reasons and acts, has recently drawn significant attention. Unlike generative AI, which functions as a chatbot that simply responds to questions, agentic AI directly invokes external tools, decides its next action based on the results, and iterates its reasoning process until the goal is achieved. These capabilities have earned it recognition as a true "AI colleague" and driven its widespread adoption.

When generating new responses, both agentic and generative AI repeatedly refer to prior dialogue and intermediate computation results. This is where the KV cache (Key-Value Cache) comes in. The KV cache can be likened to AI's "short-term memory notepad." Just as memorizing the multiplication table allows one to recall "6" instantly upon seeing "2×3" without recomputing it, AI stores previously computed results in the KV cache and retrieves them as needed to accelerate processing.
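The effect of this "notepad" can be illustrated with a toy single-head attention decoder. The following is a minimal sketch in plain Python, not Panmnesia's or any framework's implementation; the names `project`, `attend`, `KVCache`, `decode_with_cache`, and `decode_without_cache` are hypothetical and exist only for this illustration. It counts how many key/value projection passes are needed with and without a cache.

```python
import math

def project(x, w):
    """Toy linear projection: multiply vector x by matrix w (a list of rows)."""
    return [sum(xi * wij for xi, wij in zip(x, col)) for col in zip(*w)]

def attend(q, keys, values):
    """Softmax-weighted sum of values, scored by scaled dot product with q."""
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(len(q)) for k in keys]
    m = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    return [sum(w * v[i] for w, v in zip(weights, values)) / total
            for i in range(len(values[0]))]

class KVCache:
    """Hypothetical cache: keeps the keys and values of all earlier tokens."""
    def __init__(self):
        self.keys = []
        self.values = []
        self.projections = 0  # number of K/V projection passes performed

    def append(self, x, w_k, w_v):
        self.keys.append(project(x, w_k))
        self.values.append(project(x, w_v))
        self.projections += 1

def decode_with_cache(tokens, w_q, w_k, w_v):
    """Each step projects only the newest token and reuses cached K/V."""
    cache = KVCache()
    outputs = []
    for x in tokens:
        cache.append(x, w_k, w_v)
        outputs.append(attend(project(x, w_q), cache.keys, cache.values))
    return outputs, cache.projections

def decode_without_cache(tokens, w_q, w_k, w_v):
    """Each step re-projects every token seen so far."""
    outputs = []
    projections = 0
    for t in range(len(tokens)):
        keys = [project(x, w_k) for x in tokens[:t + 1]]
        values = [project(x, w_v) for x in tokens[:t + 1]]
        projections += t + 1
        outputs.append(attend(project(tokens[t], w_q), keys, values))
    return outputs, projections
```

For a sequence of n tokens, the cached decoder performs n projection passes while the uncached one performs n(n+1)/2, and both produce the same outputs. The price of that saving is that the cache must hold keys and values for every token in the context.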

The challenge is that agentic AI repeats inference multiple times to complete a single task, and the volume of context generated along the way grows enormously. As a result, the KV cache—the "multiplication table" the model must memorize—has expanded to a scale fundamentally different from that of conventional generative AI. Where and how quickly such massive KV caches can be stored and retrieved has therefore emerged as a central challenge for modern datacenters.
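A rough sense of this growth comes from the standard sizing rule for transformer KV caches: two tensors (keys and values) per layer, each holding one vector per KV head per token. The sketch below applies that rule. The model configuration and the token counts are hypothetical values chosen for illustration; they are not figures from the keynote.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, num_tokens,
                   bytes_per_element=2):
    """Approximate KV cache size for one sequence.

    The factor of 2 accounts for storing both keys and values.
    bytes_per_element=2 assumes 16-bit (FP16/BF16) storage.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * bytes_per_element * num_tokens)

# Hypothetical model configuration, for illustration only.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

GIB = 1024 ** 3

# A single chat-style exchange with a 4,096-token context (assumed).
single_turn = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, 4096)

# An agentic task that accumulates 32 steps of 4,096 tokens each (assumed).
agentic_task = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, 32 * 4096)

print(f"single turn:  {single_turn / GIB:.2f} GiB")
print(f"agentic task: {agentic_task / GIB:.2f} GiB")
```

Under these assumptions each token costs 128 KiB, so the single exchange needs 0.5 GiB and the 32-step task needs 16 GiB for one sequence alone. Because the size scales linearly with context length, and a serving system holds many sequences at once, the total quickly outgrows the memory attached to a single GPU.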

■ Panmnesia Addresses the KV Cache Bottleneck with Link Technology

Because the KV cache requirements of agentic AI far exceed GPU memory capacity, conventional datacenters have typically stored these caches in external memory or storage. However, this approach requires data to traverse general-purpose networks based on Ethernet or InfiniBand, or storage interfaces, which adds significant latency. As a result, even when GPU compute performance is high, overall system performance can be limited by delays in data access.

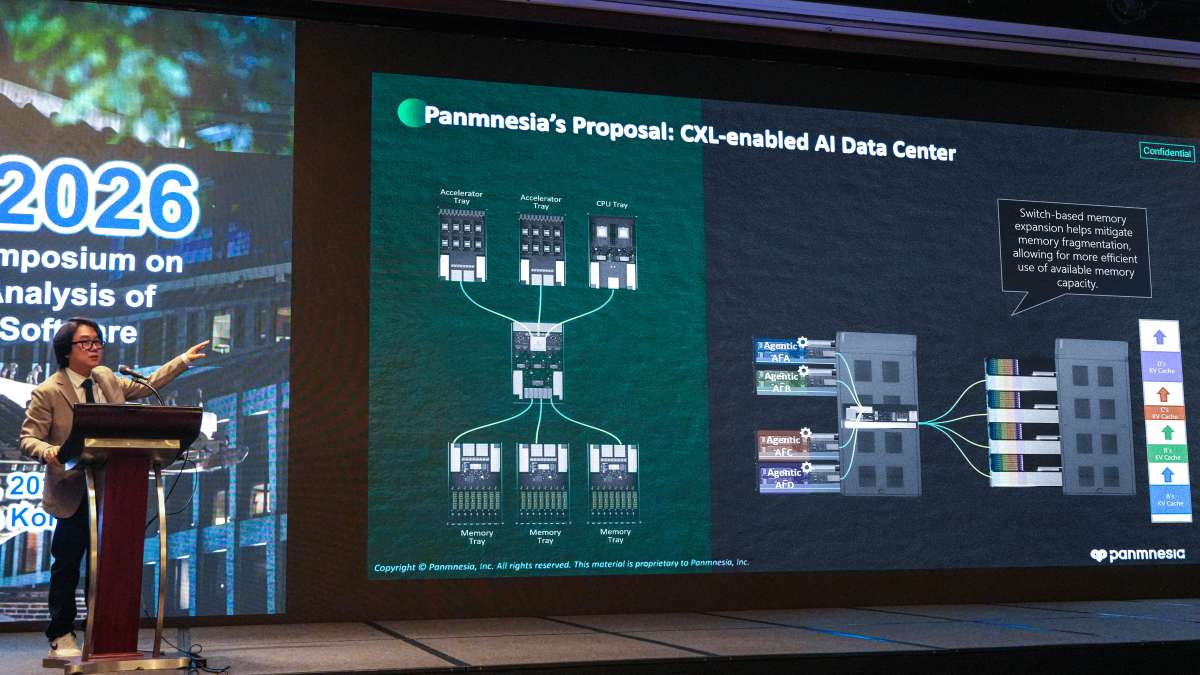

To address this, Panmnesia proposes a datacenter architecture that places the KV cache in a large memory pool based on CXL (Compute Express Link), an industry-standard interconnect for connecting system devices.

CXL enables cost-efficient scaling by allowing only the resources that are needed to be expanded. In other words, when memory runs short, memory capacity alone can be added as needed, securing more memory at a reasonable cost. Unlike Ethernet-based remote memory access, CXL also enables direct memory access without software intervention, significantly reducing access latency.

Applying a CXL-based architecture to large-scale KV cache management can significantly reduce data access time compared with existing systems, improving agentic AI inference performance.
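The general idea of keeping hot KV caches near the GPU and spilling the rest to a larger pool can be sketched as a two-tier cache. This is an illustrative software model only, not Panmnesia's design: the class `TieredKVCache` and its fields are hypothetical, the pool is represented by an ordinary dictionary, and the least-recently-used eviction policy is an assumption. It does not model CXL hardware or latency.

```python
from collections import OrderedDict

class TieredKVCache:
    """Hypothetical two-tier KV cache: a small hot tier plus a large pool."""

    def __init__(self, gpu_capacity):
        self.gpu_capacity = gpu_capacity  # max sessions held in the hot tier
        self.gpu = OrderedDict()          # hot tier, kept in LRU order
        self.pool = {}                    # stand-in for the external memory pool
        self.stats = {"gpu_hits": 0, "pool_hits": 0, "misses": 0}

    def put(self, session_id, kv_block):
        """Place a block in the hot tier, spilling LRU blocks to the pool."""
        self.pool.pop(session_id, None)   # avoid keeping two copies
        self.gpu[session_id] = kv_block
        self.gpu.move_to_end(session_id)  # mark as most recently used
        while len(self.gpu) > self.gpu_capacity:
            victim, block = self.gpu.popitem(last=False)
            self.pool[victim] = block     # evict instead of discarding

    def get(self, session_id):
        """Return the cached block, or None if it must be recomputed."""
        if session_id in self.gpu:
            self.gpu.move_to_end(session_id)
            self.stats["gpu_hits"] += 1
            return self.gpu[session_id]
        if session_id in self.pool:
            self.stats["pool_hits"] += 1
            block = self.pool[session_id]
            self.put(session_id, block)   # promote back to the hot tier
            return block
        self.stats["misses"] += 1
        return None
```

In this model a pool hit avoids recomputing the KV cache from scratch, so the cost of that hit is set by how quickly the pool can be read. That is the step the article's CXL-based architecture aims to speed up relative to network or storage paths.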

■ Panmnesia's Link Solution Products

To realize this datacenter architecture, Panmnesia is developing a comprehensive portfolio of link solution products on a full-stack basis—spanning intellectual property (IP), semiconductor chips, systems, and software. The ISPASS 2026 keynote also featured key updates on several of these products.

The foundational product is the PCIe-CXL Combo Controller, which can selectively operate as either a PCIe controller or a CXL controller. This controller acts as a kind of "translator" that enables system devices such as CPUs, GPUs, and memory to communicate with other devices through the PCIe and CXL protocols.

A key differentiator of this product is its low latency, which is in the double-digit nanosecond range. According to CEO Jung, this was made possible by designing the controller in-house, optimized specifically for the CXL protocol and its characteristics, rather than relying on external IP. He added, "Because we develop our controllers and IP in-house, we can rapidly deliver customer-tailored solutions."

Jung also introduced the PCIe 6.4–CXL 3.2 Fusion Switch, for which pre-release silicon is currently available. A switch is a semiconductor chip that connects multiple system devices to one another. The PCIe-CXL Fusion Switch supports the connection of both PCIe and CXL devices. Panmnesia's Fusion Switch stands out on two fronts: ▲ it is the industry's only switch supporting Port-Based Routing (PBR), enabling flexible device-to-device connectivity, and ▲ it incorporates a proprietary low-latency controller to deliver minimal latency. Further details are available in the related article (https://panmnesia.com/news/kr/2026-04-16-panmnesia-pcie-cxl-switch-pre-release-kor/).

Panmnesia partners may request pre-release silicon of the PCIe 6.4–CXL 3.2 Fusion Switch. For more information on pre-release silicon, products, and partnership opportunities, please contact sales@panmnesia.com.